Scrapling: The Web Scraping Backbone AI Agents Have Been Missing

AI agents like OpenClaw need real web data to act on. The bottleneck has never been the LLM—it's getting clean, structured data from the live web without bot blocks, broken selectors, or Cloudflare intercept pages. Scrapling changes that. It's the scraping backbone that makes agents actually useful.

Last updated: February 24, 2026.

Scrapling is an adaptive web scraping framework for Python. It handles everything from a single request to a full-scale crawl. The parser learns from website changes and relocates elements when pages update. The fetchers bypass anti-bot systems like Cloudflare Turnstile out of the box. For AI agents that tell a scraper what to extract, Scrapling handles the stealth. Clean data lands in your agent in seconds. No maintenance hell, no detection wars.

Why AI Agents Need This

Agents that can read email, run scripts, and control browsers are powerful. But if they can't reliably pull data from arbitrary websites, they're limited to APIs and static content. Real workflows—price monitoring, competitor research, lead enrichment, news aggregation—require scraping. Traditional scrapers break when:

- Bot detection blocks the request or serves a challenge page.

- Site structure changes and your CSS/XPath selectors return nothing.

- Cloudflare Turnstile or similar captcha-style checks block headless browsers.

Scrapling addresses all three. You describe what to extract; it handles the rest. For OpenClaw and similar agents, that means one less moving part to maintain and one more source of real-world data.

What Scrapling Does

Stealthy fetching. HTTP requests that impersonate real browsers (TLS fingerprint, headers). Dynamic sites via Playwright/Chromium with anti-bot bypass. Session support so cookies and state persist across requests. Proxy rotation built in.

Adaptive parsing. Selectors that survive design changes. The library can relocate elements when the DOM changes using similarity algorithms. You can enable adaptive=True on selectors so that when the site updates, Scrapling finds the same logical elements instead of failing.

AI-ready. pip install "scrapling[ai]" installs the MCP server. Your AI (Claude, Cursor, etc.) can use Scrapling to extract targeted content before processing it—reducing tokens and cost and speeding up operations. The MCP server does the heavy lifting; the model gets clean snippets.

By the Numbers

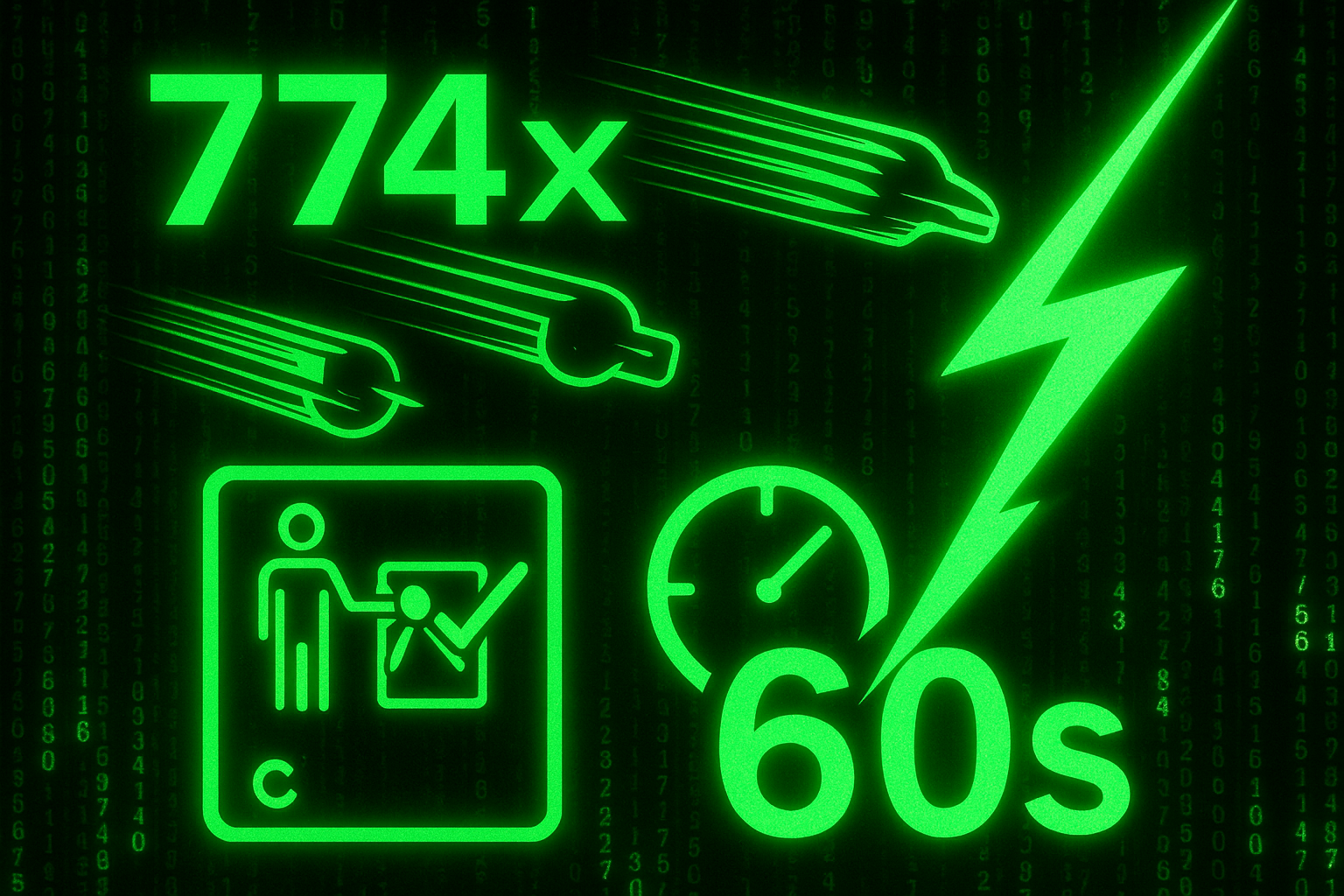

- 774× faster than BeautifulSoup with Lxml in benchmarks (see Scrapling docs).

- Bypasses all types of Cloudflare Turnstile with the stealthy fetcher and browser automation.

- 60 seconds to first scrape with

pip install "scrapling[ai]"and a few lines of code.

So you're not trading speed for stealth. You get both.

How It Fits Your Stack

Scrapling works across the spectrum:

- HTTP + browser automation — Use fast HTTP when possible; drop into a real browser when the site demands it.

- CSS, XPath, text, regex selectors — Same API you're used to from Scrapy/Parsel/BeautifulSoup.

- Async sessions — Parallel scraping with configurable concurrency and per-domain throttling.

- CLI with zero code — Extract from a URL from the terminal without writing Python.

If you're building AI agents that need real web data, this is the scraping backbone that was missing. OpenClaw can tell Scrapling what to extract; Scrapling handles the stealth and structure. You get clean data, fewer breakages, and no Cloudflare nightmares.

Open Source and License

Scrapling is 100% open source under the BSD-3 license. No vendor lock-in, no usage caps. Documentation and examples are at scrapling.readthedocs.io. The project is actively maintained with a focus on web scrapers and modern anti-bot environments.

Quick Start

Install with AI/MCP support:

pip install "scrapling[ai]"

scrapling install # downloads browsers and depsThen use the stealthy fetcher and adaptive selectors:

from scrapling.fetchers import StealthyFetcher

StealthyFetcher.adaptive = True

page = StealthyFetcher.fetch('https://example.com', headless=True, network_idle=True)

products = page.css('.product', auto_save=True)

# Or after a site redesign: products = page.css('.product', adaptive=True)For full crawls, Scrapling offers a Scrapy-like spider API with start_urls, async parse, and streaming results. Check the official docs for spiders, MCP server setup, and advanced options.

Conclusion

AI agents that can act on the web need a scraping layer that doesn't break when sites change or when anti-bot systems kick in. Scrapling provides that: stealthy fetching, adaptive selectors, and an MCP server so your agent can pull exactly what it needs. If you're building agents that need real web data—OpenClaw or otherwise—Scrapling is the backbone worth adding. One library, zero compromises.